Why SaaS execution models are changing

SaaS products have been built around deterministic workflows for the last two decades.

Business logic was explicit, system behavior was predictable, and value was delivered through well-defined features released on a controlled cadence. This model scaled because teams could design, test, and operate software with confidence once it reached production.

But we’re starting to see a shift with AI agents.

Instead of following static rules, agent-based systems:

Reason over context

Invoke tools

Adapt to new information

Continue learning after deployment

This shift changes how value is created: outcomes improve through interaction and feedback over time, not just feature releases.

SaaS leaders are already watching this transition unfold: AI now appears on almost every product roadmap. Yet execution is lagging. Enterprises are realizing that adding AI features to products built for deterministic software doesn’t automatically make them AI-native. As agents move closer to core workflows, the need for production-grade systems is becoming harder to ignore.

What AI-native SaaS means - and why it’s winning

AI-native SaaS isn’t just about bolting “AI features” onto an existing product and calling it innovation. At its core, it describes a fundamental shift in how value is delivered: from software that follows rigid, deterministic workflows to systems that reason and act on data.

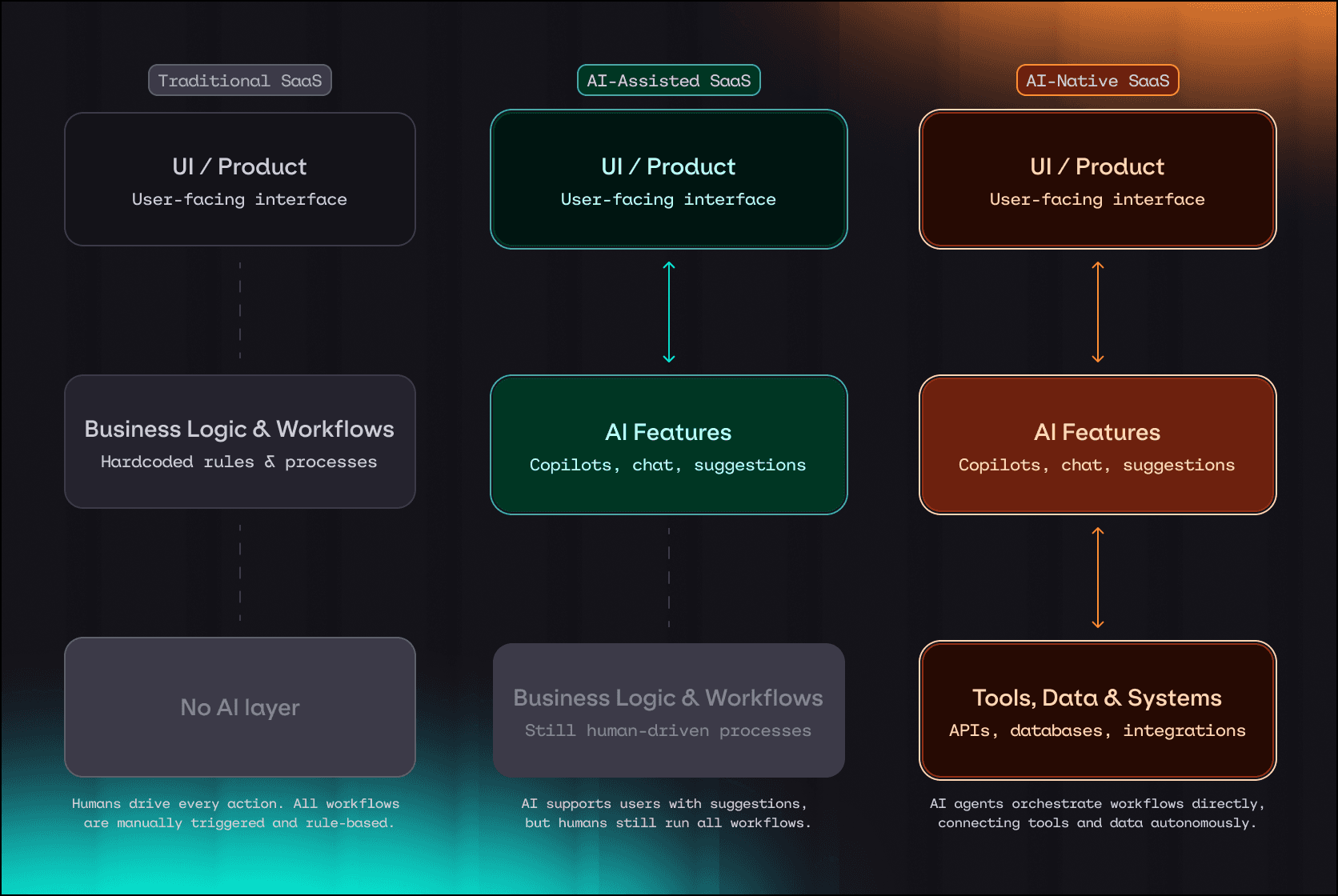

Take a look at the table below to see how traditional, AI-assisted, and AI-native SaaS differ:

| Dimension | Traditional SaaS | AI-assisted SaaS | AI-native SaaS |
|---|---|---|---|
| Core execution | Deterministic workflows and rules | Deterministic workflows with AI features layered on top | AI systems act as the execution layer |
| Role of AI | None or minimal | Assists users (copilots, chat, suggestions) | Reasons, decides, and acts autonomously |
| System behavior | Predictable and fixed after release | Mostly predictable with localized variability | Probabilistic and adaptive over time |

This transition is most visible in SaaS categories where workflows are knowledge-heavy and repetitive, such as developer tools, customer support platforms, sales and RevOps software, design and content tools, and internal analytics products.

We’re also seeing a pattern where AI-native companies are growing faster than traditional SaaS companies:

More than 80 funded AI-native SaaS startups, including Cursor, Replit, Lovable, and Jas AI, were built with agents as the execution layer from day one. As a result, many are reaching $10M ARR in roughly 12 months, compared to the typical 3–5 years for traditional SaaS companies. By contrast, incumbents like Salesforce, Notion, ServiceNow, and Canva have introduced agentic features, but often struggle to scale them without production-grade observability.

Some AI-native firms are even reaching $100M ARR in under two years with much leaner tooling, far faster than traditional SaaS growth curves.

Median annual growth for pure AI-native startups has been reported at close to 100%, compared with a typical 23% in traditional SaaS benchmarks.

What companies are doing today, and why results are mixed

In response to growing pressure, many SaaS companies are adding AI features to their products, such as copilots embedded in dashboards or prompts wrapped around routine tasks. AI experimentation has become widespread, but turning pilots into production success remains elusive.

Even after significant investment, only a small fraction of generative AI copilots produce measurable business impact: about 95% of enterprise AI implementations fell short in 2025. Beyond that, 70–85% of AI projects fail to meet their expected goals or drive value at scale, often because they never graduate beyond limited use cases.

Part of the disconnect lies in how these efforts are structured. Many AI features are built on stacks and product assumptions designed for deterministic software. Those stacks aren’t designed for continuous adaptation or real-time feedback, which become essential once AI behavior shifts as models and data change.

This pattern explains why so many SaaS enterprises find their AI features stuck in pilot purgatory, instead of scaling into core product value. The technology’s potential is real, but realizing it consistently requires moving beyond isolated features toward systems that can be monitored and improved as part of everyday operation.

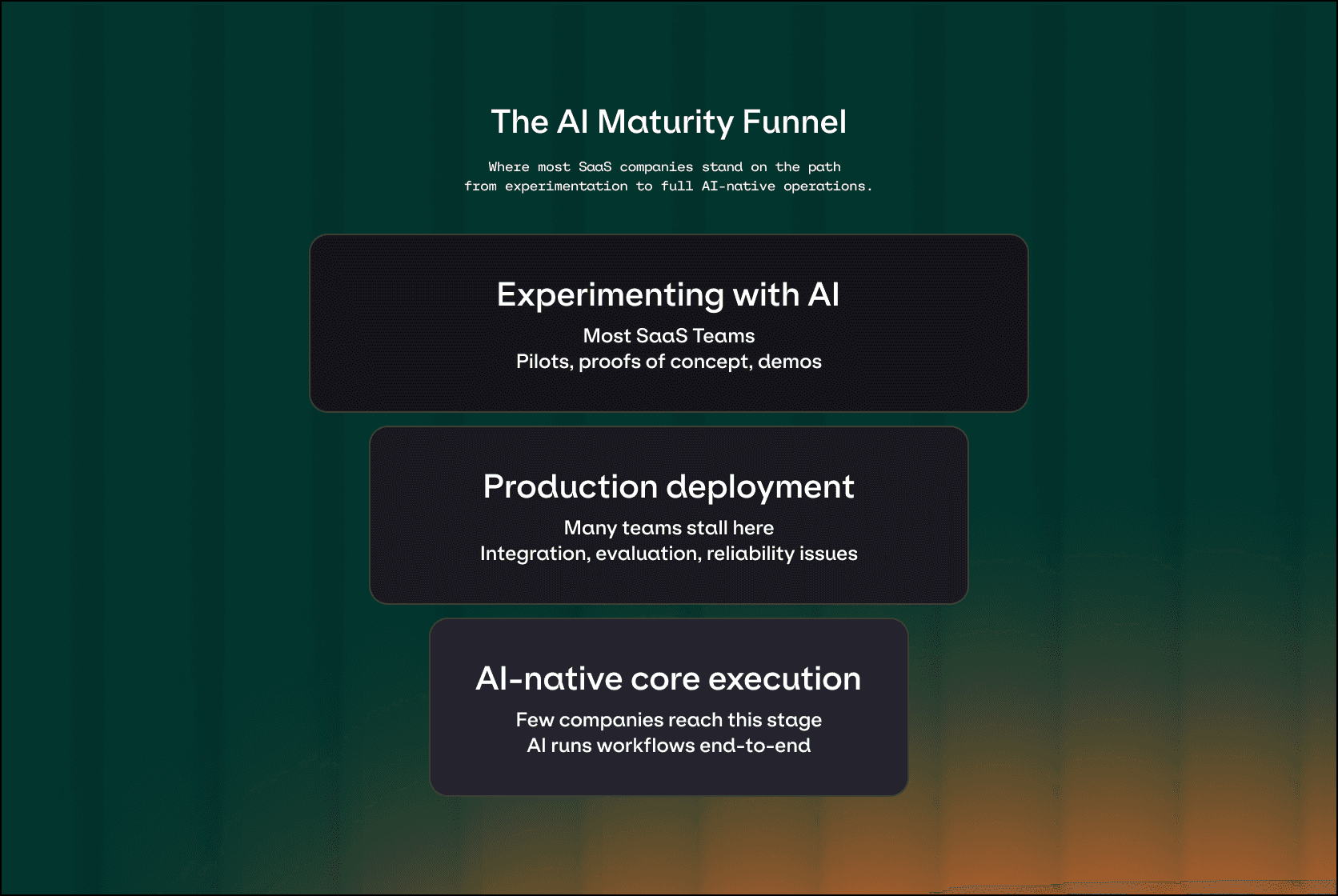

The trend shows up clearly when you look at how adoption drops off across stages.

| Stage | Adoption among traditional SaaS | Production success | Primary blocker |
|---|---|---|---|
| Experimenting with agents | Most enterprises are actively experimenting with or planning AI agents | Only a small fraction convert pilots into production ROI | Integration complexity and poor data quality |
| Production deployment | 51% have agents running; 78% plan to deploy in the near term | Around one in three struggle to operationalize despite strong pilots | Lack of lifecycle management (evaluation, observability, governance) |
| AI-native core deployment | Rare and mostly limited to AI-native startups | Consistently higher growth and faster iteration | Legacy architecture not designed for autonomous, probabilistic systems |

Without continuous evaluation tied to real usage, teams have no reliable way to improve accuracy, handle edge cases, or evolve AI behavior as part of the product.

Why AI initiatives stall in production (SaaS-specific reality)

For SaaS product teams, AI initiatives rarely fail at launch.

They fail after deployment.

One early challenge is that AI feature behavior changes without clear releases. Small changes to prompts or data can materially alter outputs, often without any versioned deployment. As a result, planning and testing become far more difficult than in traditional SaaS environments.

At the same time, edge cases multiply across customers. In multi-tenant products, what works for one segment may degrade for another. To make matters worse, these failures are rarely obvious bugs. They show up as subtle drops in quality or trust that are hard to trace, and harder to fix.

As uncertainty grows, roadmaps slow. Teams become more cautious about shipping changes, while support burden and costs become less predictable as usage scales. Governance and compliance concerns also rise as AI systems interact more deeply with customer data and workflows.

66% of AI teams lack the tools needed to meet business goals, and 71% lack confidence in their AI solutions once deployed.

The urgency: Why traditional SaaS companies must adapt now

AI-native entrants are resetting expectations for what SaaS products can deliver. Faster iterations, higher quality outputs, and systems that improve after deployment are no longer seen as experimental advantages. Instead, they’re becoming the baseline.

For traditional SaaS companies, the cost of waiting isn’t neutrality. It’s falling behind competitors whose products learn faster, adapt to edge cases more effectively, and expand into new use cases without relying on rigid release cycles. What starts as a gap in capability often turns into a gap in customer trust and expansion potential.

AI investment continues to accelerate, while enterprises push to consolidate tooling and reduce operational overhead. Buyers are increasingly evaluating products not just on features, but on how well they operate AI in production. Incremental AI additions are proving insufficient in markets where AI-native competitors are built to evolve continuously.

The practical path: how SaaS companies become AI-native

Becoming AI-native won’t be a single step for most SaaS organizations. It requires a staged transition that changes how products behave, how teams ship, and how systems are operated.

The companies that succeed don’t simply add more AI features. They change how AI is owned, evaluated, and improved over time. In practice, this transition tends to follow three distinct stages.

Stage 1 → Stage 2: From features to workflows

Many SaaS companies start by introducing AI-assisted features such as copilots, summaries, search, or drafting. AI improves usability here, but it doesn’t run the product. Core workflows are still deterministic, and AI outputs are advisory rather than authoritative.

At this stage, the biggest blocker is fragmentation. Prompts are hard-coded into features, model access is scattered across teams, and failures are handled ad hoc. AI “works,” but only in narrow contexts, and iteration feels fragile.

To move forward, teams need to make AI operable. That means centralizing how prompts and models are defined, establishing clear ownership between engineering and data teams, and capturing basic usage and failure signals from real users.
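To make “centralizing how prompts and models are defined” more concrete, here is a minimal Python sketch. It assumes a hypothetical in-process `PromptRegistry`; the class, the `ticket_summary` prompt, and the `example-model` name are illustrative only and are not the API of any particular product. Features ask the registry for a prompt instead of hard-coding one, and every use or failure is counted per prompt version, which provides the basic usage and failure signals this stage calls for.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptConfig:
    """A centrally defined prompt: its template and the model it targets."""
    name: str
    version: int
    model: str
    template: str


class PromptRegistry:
    """Hypothetical single source of truth for prompts, plus usage/failure counters."""

    def __init__(self) -> None:
        self._configs: dict[str, PromptConfig] = {}
        # Keys are (prompt name, version, event) so signals stay tied to a version.
        self.signals: Counter = Counter()

    def register(self, config: PromptConfig) -> None:
        self._configs[config.name] = config

    def render(self, name: str, **variables: str) -> tuple[str, str]:
        """Return (model, filled-in prompt) so features never hard-code either."""
        config = self._configs[name]
        self.signals[(name, config.version, "used")] += 1
        return config.model, config.template.format(**variables)

    def record_failure(self, name: str, reason: str) -> None:
        """Count a failure signal, e.g. the user rejected the output."""
        config = self._configs[name]
        self.signals[(name, config.version, f"failed:{reason}")] += 1


# Illustrative usage with made-up names.
registry = PromptRegistry()
registry.register(
    PromptConfig(
        name="ticket_summary",
        version=1,
        model="example-model",
        template="Summarize this support ticket:\n{ticket}",
    )
)
model, prompt = registry.render("ticket_summary", ticket="Login fails after password reset")
registry.record_failure("ticket_summary", "user_rejected")
```

In a real system the counters would feed a metrics or logging backend rather than living in memory; the point of the sketch is that prompt text, model choice, and signals share one owner and one version number.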

Teams know this stage is working when AI features are consistently used, outputs are trusted enough to act on, and product teams can iterate on prompts or logic without breaking releases.

Stage 2 → Stage 3: From workflows to execution

In agent-powered workflows, AI systems coordinate multiple steps, call tools, and affect real outcomes. This is where SaaS products start to feel fundamentally different, and where most companies stall.

The challenge isn't just about building agents anymore. It’s about operating them.

Progress requires organizational change. Evaluation must be tied to real usage instead of static test prompts. Observability needs to show how agents behave across customers, not just whether requests succeed. Guardrails have to reflect business rules and compliance constraints, not just model safety.

When this stage is working, teams can detect regressions quickly, investigate failures with confidence, and iterate faster without losing trust. AI stops being a fragile experiment and starts behaving like a managed system.
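One way to read “evaluation tied to real usage” and observability “across customers” is to score logged production interactions per tenant rather than only in aggregate. The minimal Python sketch below assumes each interaction has already been labeled pass/fail from a real-usage signal (for example, whether the user accepted the output). The `Interaction` type, the tenant names, the 5-point `max_drop` threshold, and the synthetic data are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    tenant: str    # customer account that made the request
    version: str   # prompt/agent version that served it
    passed: bool   # outcome signal from real usage, e.g. the user accepted the result


def pass_rates(log: list[Interaction], version: str) -> dict[str, float]:
    """Per-tenant share of passing interactions for one version."""
    totals: dict[str, int] = defaultdict(int)
    passes: dict[str, int] = defaultdict(int)
    for item in log:
        if item.version == version:
            totals[item.tenant] += 1
            passes[item.tenant] += int(item.passed)
    return {tenant: passes[tenant] / totals[tenant] for tenant in totals}


def overall_pass_rate(log: list[Interaction], version: str) -> float:
    """Pass rate across all tenants combined, the number a single dashboard tile shows."""
    served = [item for item in log if item.version == version]
    return sum(item.passed for item in served) / len(served)


def tenant_regressions(
    log: list[Interaction], baseline: str, candidate: str, max_drop: float = 0.05
) -> list[str]:
    """Tenants whose pass rate fell by more than max_drop under the candidate version."""
    before = pass_rates(log, baseline)
    after = pass_rates(log, candidate)
    return sorted(
        tenant
        for tenant in after
        if tenant in before and before[tenant] - after[tenant] > max_drop
    )


def sample(tenant: str, version: str, passed: int, total: int) -> list[Interaction]:
    """Synthetic log entries: the first `passed` of `total` interactions succeed."""
    return [Interaction(tenant, version, i < passed) for i in range(total)]


# Made-up data: a large tenant improves while a small tenant degrades.
log = (
    sample("acme", "v1", passed=90, total=100)
    + sample("acme", "v2", passed=95, total=100)
    + sample("globex", "v1", passed=9, total=10)
    + sample("globex", "v2", passed=6, total=10)
)
```

With this synthetic log, the combined pass rate rises from 0.90 for `v1` to about 0.92 for `v2`, yet `tenant_regressions(log, "v1", "v2")` returns `["globex"]`: the aggregate hides a regression that only a per-customer view exposes.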

Stage 3: AI-native systems

In AI-native systems, agents aren't just supporting workflows. They're the execution layer of the product. The system adapts continuously based on data, feedback, and changing requirements.

At this level, success depends less on individual models and more on lifecycle discipline. Continuous evaluation, versioned deployments, safe rollback, and governance become core infrastructure.
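Versioned deployments and safe rollback can be pictured as a small release history guarded by an evaluation gate. The Python sketch below is illustrative only: `DeploymentTrack`, `Release`, the scores, and the 0.01 tolerance are assumptions, and `eval_score` stands in for whatever continuous evaluation a team actually runs. A candidate is promoted only if it does not score meaningfully worse than the live version, and the previous version can be restored in one step.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Release:
    version: str
    eval_score: float  # hypothetical score from continuous evaluation on real-usage samples


@dataclass
class DeploymentTrack:
    """Hypothetical versioned release history with an evaluation gate and rollback."""
    history: list[Release] = field(default_factory=list)

    @property
    def live(self) -> Release:
        return self.history[-1]

    def promote(self, candidate: Release, tolerance: float = 0.01) -> bool:
        """Ship the candidate unless it scores worse than live by more than tolerance."""
        if self.history and candidate.eval_score < self.live.eval_score - tolerance:
            return False  # gate blocks the regression; the live version is unchanged
        self.history.append(candidate)
        return True

    def rollback(self) -> Release:
        """Drop the live release and make the previous version live again."""
        if len(self.history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.history.pop()
        return self.live


track = DeploymentTrack()
track.promote(Release("v1", eval_score=0.82))
track.promote(Release("v2", eval_score=0.85))
blocked = not track.promote(Release("v3", eval_score=0.70))  # fails the gate
restored = track.rollback()  # e.g. v2 misbehaves in production: return to v1
```

In practice the history would live in durable storage and a rollback would also re-route traffic; the sketch models only the promote-or-block and rollback decisions.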

Mature teams see reliability improve over time instead of degrading, with product improvements driven by feedback loops.

Evergrowth, for example, moved from a prototype workflow to operating more than 50 AI agents in production, supported by structured prompt testing, monitoring, and collaboration across teams. The platform enabled faster iteration and operational oversight, contributing to a 4× faster time-to-market and better cross-team coordination as the system scaled.

Can teams skip stages?

In fast-moving categories like developer tools, customer support, or internal automation, some companies move quickly from Stage 1 to Stage 2. But skipping lifecycle foundations almost always increases operational risk.

The window to adapt is narrowing for SaaS teams

AI-native SaaS is quickly becoming the default delivery model for modern software. As AI systems move from experimental features into core workflows, the assumptions that shaped traditional SaaS products no longer hold.

For established SaaS companies, the window to adapt is narrowing. Incremental AI additions can create short-term momentum, but long-term success depends on the ability to operate AI systems reliably over time.

Ultimately, this shift isn’t about adopting a specific model or interface. It’s about building the infrastructure and operating discipline required to support continuous improvement at scale. To see how teams are putting these principles into practice, explore how Orq.ai helps teams operate AI systems across the full lifecycle.