The real bottleneck in AI agents isn’t intelligence

On paper, the building blocks for intelligent systems already exist. Enterprises know that today’s foundation models can reason over complex context, generate code, and complete multi-step workflows.

Yet in production, many agents fail to meet expectations:

They behave inconsistently across users

They struggle with edge cases

They erode user trust over time, even as the underlying models continue to improve

Interestingly, these failures rarely stem from a lack of model capability. Instead, they typically emerge from factors around the model: how agents are designed, how changes are tested, how performance is measured, and how feedback is incorporated after launch.

In our experience, most organizations keep these responsibilities disconnected. Each group (product teams, engineers, domain experts) works in its own tools, with its own feedback loop and success metrics. Product lives in roadmaps and tickets, engineers in code and infrastructure dashboards, and domain experts in CRM or ops tools.

As a result, improvements don’t compound, and every iteration feels riskier than it should. The underlying AI keeps getting more powerful, but the system around it stays disconnected.

The missing layer isn’t a better model. It’s a collaborative system that lets teams see the same reality and shape agent behavior together.

What collaborative AI really means (and what it doesn’t)

Collaborative AI is often misunderstood as a feature layer. When you hear the term, you might think of shared dashboards, comments, and human-in-the-loop review screens. But those are surface features, not what actually makes agents better.

True collaborative AI is about how teams shape agent behavior together, with shared accountability. AI-augmented cross-functional teams are three times more likely to produce breakthrough ideas than teams working in isolation. When product, engineering, and domain experts operate around the same system, quality improves faster, and those improvements compound.

There’s also a real risk in doing this poorly. Two-thirds of companies adopting AI agents report measurable productivity value, yet 46% say they fear falling behind competitors. Early gains don’t last if teams can’t evolve their systems together.

In high-quality agent systems, improvement doesn’t come from a single role. It comes from the interaction between product teams who define outcomes, domain experts who understand edge cases, and engineers who build the logic that turns intent into behavior. Each group holds a different piece of the system’s truth, and collaborative AI is how those pieces finally come together in one place.

Why high-quality agents require shared ownership across teams

One of the biggest reasons AI agents struggle in production is simple: no one truly owns them end to end.

Up to 85% of AI initiatives fail to reach full production, often because responsibility is scattered across product, engineering, data, and operations teams. Each group controls a different part of the system, but no one controls all of it at once.

Even as adoption grows, only 23% of organizations report scaling agentic AI enterprise-wide. Among those deploying agents, only about two-thirds see measurable productivity gains, while the rest remain stuck in disconnected pilots.

Product teams define what the agent should do, engineers decide how it does it, and domain experts see when it fails in real workflows.

But in most organizations, these perspectives never meet inside the system itself. They’re separated across tools, meetings, and handoffs. As a result, agent behavior becomes a tangle of decisions that no single team can fully explain or improve.

High-quality agents can’t be built this way, and this fragmented ownership ends up being one of the biggest bottlenecks.

When ownership is scattered in too many directions, teams default to defensive behavior:

Engineers hesitate to change prompts or models because they can’t see the business impact.

Product teams struggle to iterate because they don’t understand the technical constraints.

Domain experts stop reporting issues because nothing seems to change when they do.

Shared ownership breaks this cycle by giving teams both visibility and a clear way to act on it.

By aligning teams around the same traces and feedback loops, organizations turn quality into a collective responsibility instead of a handoff problem. Improvements become visible, measurable, and reversible, which lets teams move faster without losing trust.

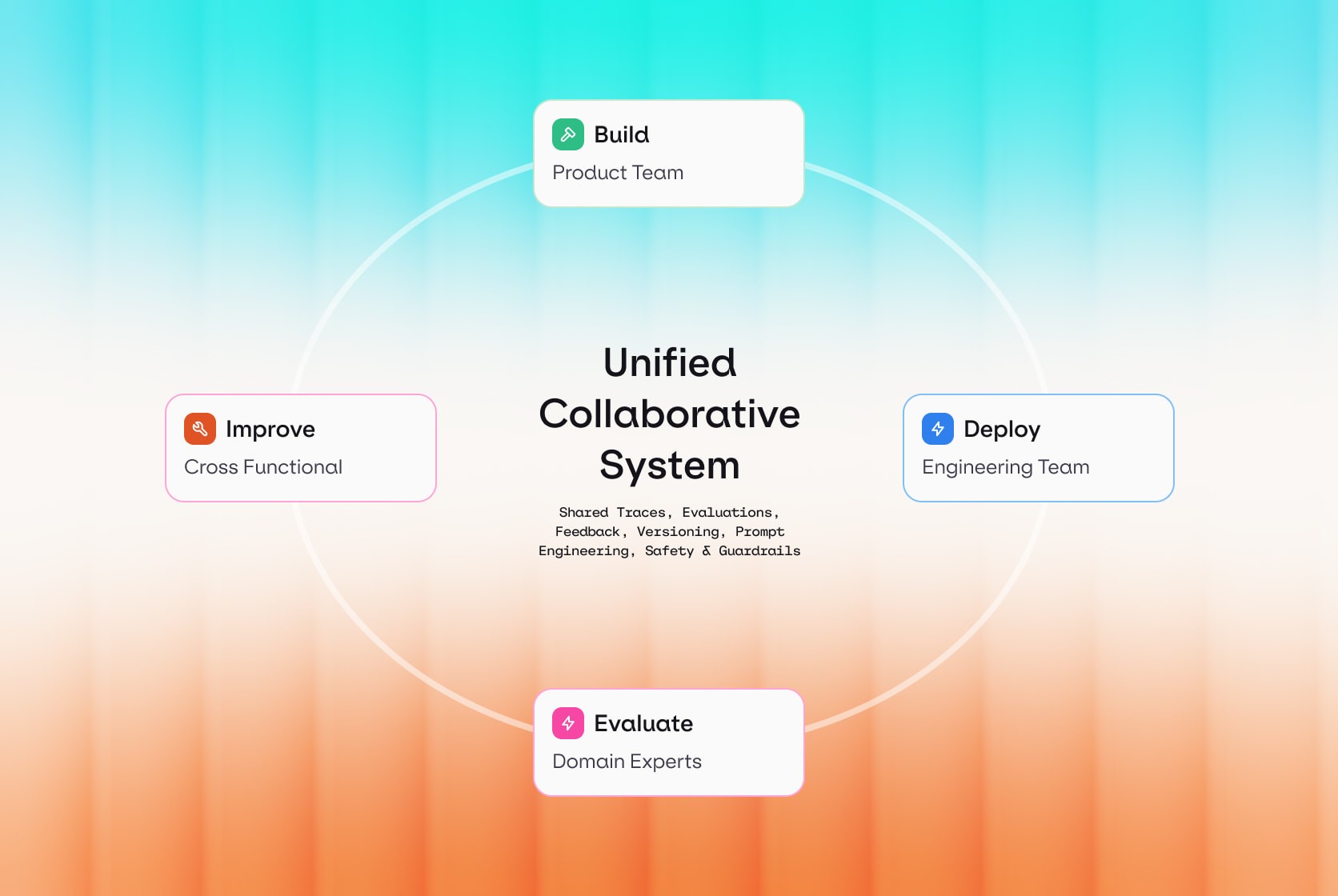

Collaborative AI across the agent lifecycle (build → deploy → evaluate → improve)

Collaboration only matters if it’s embedded into how agents are built and operated, and not added as a process layer on top.

Over two-thirds of enterprises say fewer than 30% of their AI experiments will ever scale into production. And over 80% of AI implementations fail within the first six months, a sign that early success rarely survives real-world complexity.

A common pattern we’ve seen is that each stage of the agent lifecycle is owned by a different group. Once the agent is live, the handoffs between those groups slow everything down and make quality hard to improve.

Collaborative AI changes this by connecting teams through the same lifecycle loop:

During build, product teams and domain experts define clear standards for outcomes, not just features. Engineers and ML teams translate those expectations into prompts, tools, policies, and workflows that can actually be tested and evolved.

During deploy, platform and security teams ensure changes are safe, versioned, and reversible. Instead of blocking iteration, they enable it by giving everyone confidence that new behavior can be rolled back if it degrades.

During evaluate, domain experts, product managers, and ML teams look at the same signals: traces, outputs, user feedback, and business metrics. For example, instead of support leaders logging issues in a separate system and engineers guessing what went wrong, everyone can see the same trace: which prompt variant ran, which tools were called, how long it took, and exactly what the user saw.

During improve, those insights feed directly back into the system. Prompts, logic, tools, and guardrails are refined together, then redeployed through the same controlled loop.
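To make the deploy, evaluate, and improve steps a little more concrete, here is a minimal Python sketch of two ideas from the loop above: a trace record that every team reads the same way, and a versioned prompt store where rollback re-activates an earlier version instead of editing in place. Everything here is a hypothetical illustration under simplifying assumptions (an in-memory store, one prompt per agent, made-up names such as `Trace`, `PromptRegistry`, `prompt_version`, and `user_visible_output`). It is not Orq.ai’s API or any specific platform’s schema.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ToolCall:
    """One tool invocation made by the agent during a run (hypothetical schema)."""
    name: str
    duration_ms: float


@dataclass
class Trace:
    """A single agent run as every team sees it (hypothetical schema)."""
    trace_id: str
    prompt_version: int         # which prompt variant ran
    tool_calls: List[ToolCall]  # which tools were called
    total_ms: float             # how long the run took end to end
    user_visible_output: str    # exactly what the user saw
    feedback: List[str] = field(default_factory=list)  # notes from any team


class PromptRegistry:
    """Hypothetical versioned prompt store: publishing never overwrites an old
    version, so rolling back just re-activates the previously live one."""

    def __init__(self) -> None:
        self._versions: Dict[int, str] = {}
        self._live_history: List[int] = []  # versions in the order they went live

    def publish(self, text: str) -> int:
        version = len(self._versions) + 1
        self._versions[version] = text
        self._live_history.append(version)
        return version

    @property
    def active_version(self) -> int:
        # Assumes at least one version has been published.
        return self._live_history[-1]

    def active_text(self) -> str:
        return self._versions[self.active_version]

    def rollback(self) -> int:
        """Return to the version that was live before the current one."""
        if len(self._live_history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._live_history.pop()
        return self.active_version


# Usage: publish two prompt versions, record a trace, then roll back.
registry = PromptRegistry()
registry.publish("You are a support agent. Answer politely.")
registry.publish("You are a support agent. Answer politely and cite the refund policy.")

trace = Trace(
    trace_id="t-001",
    prompt_version=registry.active_version,
    tool_calls=[ToolCall("lookup_order", 120.0), ToolCall("get_refund_policy", 45.0)],
    total_ms=900.0,
    user_visible_output="Your refund has been issued.",
)
# A support lead annotates the same trace the engineers are looking at.
trace.feedback.append("Did not confirm the refund amount with the customer.")

# If the new version degrades quality, roll back to the previous one.
registry.rollback()
print(trace.prompt_version, registry.active_version)  # 2 1
```

The design choice worth noting is that publishing never overwrites an old version, so rolling back is cheap and the trace still records exactly which version produced a given answer. A production setup would presumably also persist traces, attach evaluation scores, and control who is allowed to publish.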

This shared lifecycle turns improvement into a team sport.

Instead of waiting for incidents or user complaints, teams continuously learn from real usage and evolve the agent as a living system. That’s what allows collaborative AI to scale quality: not by working harder, but by working together around the same feedback loop.

The next question is who does what inside that feedback loop.

Who owns what: collaboration roles in production-grade agents

In our experience, when ownership is unclear, quality problems fall between teams. High-quality agents require shared ownership, with clear roles across the lifecycle.

Nearly 75% of corporate AI initiatives never reach full production due to misalignment between business and technical execution. Teams operate in parallel, but never converge on shared definitions of quality or success.

Only 5% of enterprise AI projects deliver measurable impact at scale, even though 80% of organizations explore AI tools and 60% launch pilots.

The missing link is shared accountability, backed by clear roles:

Product and domain teams define success: They spell out standards and expectations in real business terms, such as which outcomes matter, which mistakes are unacceptable, and where automation is or isn’t allowed. Their role is to translate business intent into measurable goals for the agent.

Engineering teams build and integrate the system: They design workflows, connect tools, manage state, and ensure the agent can operate inside real product flows. Their focus is reliability, performance, and maintainability.

ML and data teams own behavior quality: They evaluate outputs, define test sets, tune prompts and models, and track regressions over time. Their job is to make sure the agent improves instead of drifting.

Platform, security, and operations teams protect the system in production: They manage deployments, access controls, monitoring, cost, and compliance. They ensure agents can evolve safely without putting customers or the business at risk.
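The split between product-defined standards and ML-owned regression tracking can be sketched as a simple release gate. In this hypothetical Python example, domain experts supply the test cases (here reduced to a phrase each answer must contain), product chooses how much of a drop is acceptable (`max_drop`), and the gate compares a candidate agent against the current baseline. The substring check is a deliberately crude stand-in for a real evaluator such as human review or model-graded scoring, and the names `EvalCase`, `pass_rate`, and `regression_gate` are illustrative assumptions, not part of any library or platform.

```python
from dataclasses import dataclass
from typing import Callable, List

Agent = Callable[[str], str]  # hypothetical: an agent maps a user message to a reply


@dataclass
class EvalCase:
    """One example from a shared test set (hypothetical schema)."""
    case_id: str
    user_input: str
    must_contain: str  # phrase agreed with domain experts; crude stand-in for a grader


@dataclass
class GateResult:
    baseline: float
    candidate: float
    ship: bool


def pass_rate(agent: Agent, cases: List[EvalCase]) -> float:
    """Fraction of cases whose reply contains the required phrase (case-insensitive)."""
    if not cases:
        raise ValueError("test set is empty")
    passed = sum(1 for c in cases if c.must_contain.lower() in agent(c.user_input).lower())
    return passed / len(cases)


def regression_gate(baseline: Agent, candidate: Agent,
                    cases: List[EvalCase], max_drop: float = 0.0) -> GateResult:
    """Allow the candidate to ship only if its pass rate drops by at most max_drop."""
    base = pass_rate(baseline, cases)
    cand = pass_rate(candidate, cases)
    return GateResult(baseline=base, candidate=cand, ship=cand >= base - max_drop)


# Usage with toy rule-based agents standing in for real prompt or model versions.
cases = [
    EvalCase("c1", "How do I get a refund?", "refund policy"),
    EvalCase("c2", "Please cancel my order", "order number"),
]


def baseline_agent(message: str) -> str:
    if "refund" in message.lower():
        return "See our refund policy for details."
    return "Please share your order number so I can help."


def candidate_agent(message: str) -> str:
    # A "simplified" version that now answers everything the same way.
    return "See our refund policy for details."


result = regression_gate(baseline_agent, candidate_agent, cases)
print(result)  # GateResult(baseline=1.0, candidate=0.5, ship=False)
```

Because the candidate regresses on the order-cancellation case, the gate blocks it even though it still handles refunds. Raising `max_drop` is a product decision about which mistakes are tolerable, which is exactly the kind of choice these roles are meant to make together.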

These roles only work together when they’re plugged into the same system of traces, evaluations, and deployments.

Collaborative AI means everyone is connected to the same feedback loop, with shared visibility into how the agent behaves and why. That’s how accountability becomes collective and how quality becomes sustainable.

Why collaborative AI breaks without lifecycle platforms

Collaborative AI depends on shared context. The problem is that most teams don’t actually share the same system. Everyone works on the same agent, but through different versions of reality and different slices of data.

Over 40% of agentic AI projects are predicted to be canceled by 2027, mainly because of operational uncertainty and unclear value.

This disconnect breaks collaboration at the exact moment it’s needed most. When a prompt changes, no one can see how it affected production. When quality drops, teams can’t trace the cause. When a model update causes regressions, there’s no safe way to test, roll back, or compare behavior.

Over time, this creates three main problems:

Decisions slow down, because every change feels risky

Trust erodes, because no one can fully explain what the agent is doing

Quality stagnates, because feedback can’t flow back into development

That’s why collaborative AI collapses without lifecycle platforms.

Lifecycle platforms connect development, deployment, evaluation, and feedback into a single operating system. The same agent definition, traces, metrics, and evaluations are shared across teams. That’s the layer most organizations are missing today, and the one platforms like Orq.ai are designed to provide so teams can collaborate on agents with a single source of truth.

From collaboration to scale: building better agents

High-quality agents rarely fail because teams lack models or tooling; they fail because collaboration breaks down once systems reach production.

As AI systems move into core workflows, success isn't just defined by what an agent can do in a demo. It's defined by how reliably teams can operate, measure, and improve that agent over time. This requires more than isolated tools or handoffs between roles. It requires a shared operating model where product, engineering, ML, and operations work from the same source of truth.

Collaborative AI is what makes that possible. It aligns teams around the full agent lifecycle, not just around delivery milestones. When development, deployment, evaluation, and improvement are connected, every change becomes visible, measurable, and reversible. That’s the layer that turns collaboration into scale.

Book a demo with Orq.ai to see how collaborative AI can help you scale reliable, production-grade agents.